plex


Whether AI would wipe out humans entirely is a separate question (and one which has been debated extensively, to the point where I don't think I have much to add to that conversation, even if I have opinions).

What I'm asking here is narrower: would an AI which wipes out humans leave nature intact? I think the answer to that is pretty clearly no by default.

(cross posting my reply to your cross-posted comment)

I'm not arguing about p(total human extinction|superintelligence), but about p(nature survives|total human extinction from superintelligence), as this is a conditional probability I sometimes see people getting very wrong.

It's not implausible to me that we survive for decision-theoretic reasons; this seems possible, though it's not my default expectation (I mostly expect that "Decision theory does not imply we get nice things" holds, unless we manually win a decent chunk more timelines than I expect).

My confidence is in the claim "if AI wipes out humans, it will wipe out nature". I don't engage with counterarguments to a separate claim, as that is beyond the scope of this post and I don't have much to add over existing literature like the other posts you linked.

AI Safety Support has long been a remarkably active in-the-trenches group patching the many otherwise gaping holes in the ecosystem: someone who's available to talk and help people get a basic understanding of the lie of the land from a friendly face, resources to keep people informed in ways which were otherwise neglected, and support around fiscal sponsorship and coaching. This matters especially for people trying to join the effort who don't have a close connection to the inner circles, where it's less obvious that these are needed.

I'm sad to see the supporters not having been adequately supported to keep up this part of the mission, but excited by JJ's new project: Ashgro.

I'm also excited by AI Safety Quest stepping up as a distributed, scalable, grassroots version of several of the main duties of AI Safety Support, which are ever more keenly needed with the flood of people who want to help as awareness spreads.

running a big AI Alignment conference

Would you like the domain aisafety.global for this? It's one of the ones I collected on ea.domains which I'm hoping someone will make use of one day.

Disagree with the example. Human teenagers spend quite a few years learning object recognition and other skills necessary for driving before they ever drive, and I'd bet at good odds that an end-to-end training run of a self-driving car network is shorter than even the driving lessons a teenager goes through to become proficient at a similar level to the car. Designing the training framework takes longer, but the comparator there is evolution's millions of years, so that doesn't buy you much.

Your probabilities are not independent; your estimates mostly flow from a world model which seems to me to be flatly and clearly wrong.

The plainest examples seem to be assigning

| We invent a way for AGIs to learn faster than humans | 40% |

| AGI inference costs drop below $25/hr (per human equivalent) | 16% |

despite current models learning vastly faster than humans (the training time of LLMs is not a human lifetime, and covers vastly more data), current systems nearing AGI, and inference being dramatically cheaper and plummeting with algorithmic improvements. There is a general factor of progress, where progress leads to more progress, which you seem to be missing in the positive factors. For the negative factors, given more reasonable models of progress, a derailment that delays things enough to push us out that far needs to be extreme, on the order of a full-out nuclear exchange.

I'll leave you with Yud's preemptive reply:

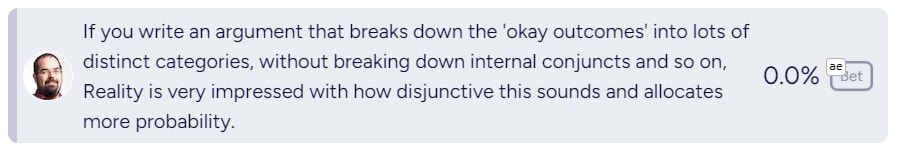

Taking a bunch of numbers and multiplying them together causes errors to stack, especially when those errors are correlated.
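To make the stacking point concrete, here is a rough Monte Carlo sketch. It is a toy model and not anything from the original forecast: the number of factors (10), the per-factor error size (`sigma = 0.3` in log space) and the correlation (`rho = 0.8`) are all made-up illustrative values, and `log_product_error_spread` is a hypothetical helper name. It compares how far off the product of several estimates ends up when the per-estimate errors are independent versus when they share a common cause, such as a single mistaken world model.

```python
import math
import random
import statistics


def log_product_error_spread(n_factors, sigma, rho, trials=20000, seed=0):
    """Std. dev. of the log-error of a product of n_factors estimates.

    Each estimate is assumed to be off by a factor exp(e_i), where
    e_i = sigma * (sqrt(rho) * shared + sqrt(1 - rho) * own_i).
    Every e_i then has std. dev. sigma and pairwise correlation rho.
    """
    rng = random.Random(seed)
    totals = []
    for _ in range(trials):
        shared = rng.gauss(0, 1)  # error common to all estimates
        total = 0.0
        for _ in range(n_factors):
            own = rng.gauss(0, 1)  # error specific to one estimate
            total += sigma * (math.sqrt(rho) * shared + math.sqrt(1 - rho) * own)
        # The log of a product is the sum of the logs, so the log-errors add.
        totals.append(total)
    return statistics.pstdev(totals)


# Illustrative values only (assumptions, not taken from the post).
independent = log_product_error_spread(10, 0.3, rho=0.0)
correlated = log_product_error_spread(10, 0.3, rho=0.8)

print(f"independent errors: log-spread of product = {independent:.2f}")
print(f"correlated errors:  log-spread of product = {correlated:.2f}")
```

Under these assumptions the spread grows like sqrt(n) when the errors are independent, but much closer to n when they are strongly correlated, so a shared mistake in the underlying model dominates the final number.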

Nice! Glad to see more funders entering the space, and excited to see the S-process rolled out to more grantmakers.

Added you to the map of AI existential safety:

I don't claim it's impossible that nature survives an AI apocalypse which kills off humanity, but I do think it's an extremely thin sliver of the outcome space (<0.1%). What odds would you assign to this?