Welcome to the AI Safety Newsletter by the Center for AI Safety. We discuss developments in AI and AI safety. No technical background required.

Subscribe here to receive future versions.

Listen to the AI Safety Newsletter for free on Spotify.

AI Labs Fail to Uphold Safety Commitments to UK AI Safety Institute

In November, UK Prime Minister Rishi Sunak announced that leading AI labs and governments had committed to "work together on testing the safety of new AI models before they are released." But reporting from Politico suggests that these commitments have fallen through.

OpenAI, Anthropic, and Meta have all failed to share their models with the UK AISI before deployment. Only Google DeepMind, headquartered in London, has given pre-deployment access to UK AISI.

Anthropic released the most powerful publicly available language model, Claude 3, without any window for pre-release testing by the UK AISI. When asked for comment, Anthropic co-founder Jack Clark said, “Pre-deployment testing is a nice idea but very difficult to implement.”

When asked about their concerns with pre-deployment testing, Meta’s spokesperson argued that Meta is an American company and should only have to comply with American regulations, even though the US has signed agreements with the UK to collaborate on AI testing. Other lab sources mentioned the possibility of leaking intellectual property secrets, and the risk that safety testing could slow down model releases.

This is a strong signal that AI companies should not be trusted to follow through on safety commitments if those commitments conflict with their business interests. Because of the ongoing race among AI labs, all AI developers face pressure to keep up with their competitors at the expense of safety, even if they’re concerned that AI development poses catastrophic risks to humanity.

Fortunately, there are several ongoing efforts to turn voluntary commitments into legal requirements. The UK government said it plans to develop “targeted, binding requirements” to ensure safety in frontier AI development. In California, a bill is being considered which would require companies to self-certify that they’ve evaluated and mitigated catastrophic risks before releasing AI systems, and allow them to be sued for violations of this law. Given the fragility of voluntary commitments to AI safety, future policy work should aim to make those commitments legally binding.

For more on this story, check out the full Politico article here.

New Bipartisan AI Policy Proposals in the US Senate

US Senators introduced two new proposals for AI policy last week. One would establish a mandatory licensing system for frontier AI developers, while the other would encourage the development of AI evaluations and the adoption of voluntary safety standards.

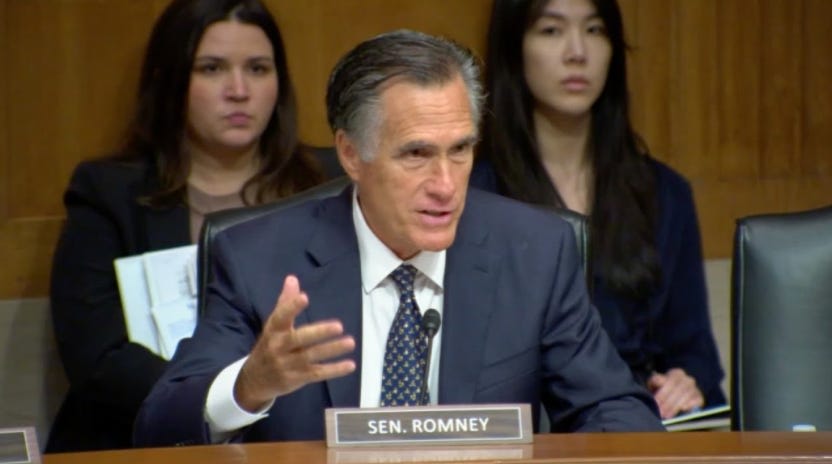

Mandatory licensing of frontier AI developers focused on catastrophic risks. Senators Mitt Romney (R-UT), Jack Reed (D-RI), Jerry Moran (R-KS), and Angus King (I-ME) unveiled a new policy framework that focuses exclusively on extreme risks from AI development.

Their letter to the Senate AI working group leaders outlines the evidence that AI systems could soon meaningfully assist in the development of biological, chemical, cyber, and nuclear weapons. They note that research on this topic has been recognized by the Department of Defense, Department of State, U.S. Intelligence Community, and National Security Commission on AI as demonstrating the extreme risks that AI could soon pose to national security and public safety.

Their policy framework would:

- Require licenses for training and deploying large-scale AI systems, as well as owning large stocks of high-performance computing hardware

- Apply to models trained with more than 10^26 operations which are either general-purpose or intended for use in bioengineering, chemical engineering, cybersecurity, or nuclear development.

- Establish a new oversight entity. This could be within the Department of Commerce, within the Department of Energy, as a new interagency coordinating body, or as an entirely new agency.

On the whole, this framework would establish serious safeguards against catastrophic AI risks. This is a valuable contribution to ongoing discussions about US AI policy, and a reference point for a strong regulatory framework focused on extreme risks.

Establishing processes to track AI vulnerabilities, incidents, and supply chain risks. Senators Mark Warner (D-VA) and Thom Tillis (R-NC) have introduced the Secure Artificial Intelligence Act of 2024 to improve the tracking and management of security vulnerabilities and safety incidents associated with AI systems.

The bill's key provisions would:

- Require NIST to incorporate AI systems into the National Vulnerability Database (NVD), and require CISA to update the Common Vulnerabilities and Exposures (CVE) program or create a new process for tracking voluntarily reported AI vulnerabilities.

- Establish a public database for tracking voluntarily reported AI safety and security incidents.

- Create a multi-stakeholder process to develop best practices for managing AI supply chain risks during model training and maintenance.

- Task the NSA to establish an AI Security Center that provides an AI test-bed, develops counter-AI guidance, and promotes secure AI adoption.

This act lays important groundwork for improving the visibility, tracking, and collaborative management of AI safety risks as the capabilities and adoption of AI systems grow. It complements other legislative proposals focused on evaluations, standards, and oversight for higher-risk AI applications.

Establishing NIST AI Safety Institute to develop AI evaluations and voluntary safety standards. The Future of AI Innovation Act was introduced this week by Senators Maria Cantwell (D-WA), Todd Young (R-IN), John Hickenlooper (D-CO), and Marsha Blackburn (R-TN). It would formally establish the NIST AI Safety Institute with a mandate to develop AI evaluations and voluntary safety standards.

This bill would help advance the science of AI evaluations, paving the way for future policy that would require safety testing for frontier AI developers.

Military AI in Israel and the US

Militaries are increasingly interested in AI development. Here, we cover reports that Israel is using AI to identify targets for airstrikes in Gaza, and that the US has massively increased spending on military AI systems in this year’s budget.

Lavender, an AI system used by the Israeli military to identify airstrike targets. Earlier this month, Israeli news outlets +972 Magazine and Local Call reported on Israel’s use of military AI in the ongoing conflict in Gaza. They describe the system, known as “Lavender,” as follows:

The Lavender software analyzes information collected on most of the 2.3 million residents of the Gaza Strip through a system of mass surveillance, then assesses and ranks the likelihood that each particular person is active in the military wing of Hamas or PIJ. According to sources, the machine gives almost every single person in Gaza a rating from 1 to 100, expressing how likely it is that they are a militant.

Limited human oversight of military AI. Some argue that military AI systems should be monitored by a “human in the loop” to oversee their decisions and prevent errors. But this could put militaries at a competitive disadvantage, as fast-paced wartime environments might benefit from equally speedy AI decision-making, and human operators might make more errors than an AI system working alone.

Lavender appears to have limited human oversight. People set the high-level parameters, such as the tolerance for “collateral damage” of civilian deaths when targeting militants. Lavender then provides suggestions for airstrike targets, which a human operator reviews in a matter of seconds:

“A human being had to [verify the target] for just a few seconds,” B. said, explaining that this became the protocol after realizing the Lavender system was “getting it right” most of the time. “At first, we did checks to ensure that the machine didn’t get confused. But at some point we relied on the automatic system, and we only checked that [the target] was a man — that was enough. It doesn’t take a long time to tell if someone has a male or a female voice.”

Israel disputes the report. Responding to similar reports by The Guardian, the Israeli Defense Forces released a statement: “Contrary to claims, the IDF does not use an artificial intelligence system that identifies terrorist operatives or tries to predict whether a person is a terrorist.”

US investment in military AI skyrockets. There was a 1500% increase in the potential value of US Department of Defense contracts related to AI between August 2022 and August 2023, finds a new Brookings report. “In comparison, NASA and HHS increased their AI contract values by between 25% and 30% each,” the report notes. Overall, the report says that “DoD grew their AI investment to such a degree that all other agencies become a rounding error.”

DARPA uses AI to operate a plane in a dogfight. The Defense Advanced Research Projects Agency (DARPA) recently disclosed that an AI-controlled jet successfully engaged a human pilot in a real-world dogfight test last year. Although simulations involving AI are common, this event marks the first known instance of AI piloting a US Air Force aircraft under actual combat conditions. Although a safety pilot was on board, the safety switch was not activated at any point throughout the flight. Unlike earlier systems that relied on hardcoded AI instructions known as expert systems, this test used a machine learning-based system.

Previous efforts to slow military AI adoption have had limited success. In the 2010s, the Campaign to Stop Killer Robots received widespread support from the public and leaders within the AI industry, as well as UN Secretary-General António Guterres. Yet the United States and other major powers resisted these calls, saying they would use AI responsibly in military contexts while declining to support bans on the technology.

More recently, countries have sought more limited commitments that military powers might agree to. In November, there were rumors that a meeting between President Biden and President Xi would feature an agreement to avoid automating nuclear command and control with AI, but no such commitment was made.

What policy commitments on military AI are both desirable and realistic? How can military leaders better understand AI risks, and develop plans for responsibly reducing those risks? So far, attempts to answer these questions have not yielded breakthroughs in military AI policy. More work will be needed to assess and respond to this growing risk.

New Online Course on AI Safety from CAIS

Applications are open for AI Safety, Ethics, and Society, an online course running July-October 2024. Apply to take part by May 31st.

The course is based on a new AI safety textbook by Dan Hendrycks, Director of the Center for AI Safety. It is aimed at students and early-career professionals who would like to explore the core challenges in ensuring that increasingly powerful AI systems are safe, ethical and beneficial to society.

The course is delivered via interactive small-group discussions supported by facilitators, along with accompanying readings and lecture videos. Participants will also complete a personal project to extend their knowledge. The course is designed to be accessible to a non-technical audience and can be taken alongside work or other studies.

Links

Model Updates

- Meta releases the weights of Llama 3, claiming it beats Google’s Gemini 1.5 Pro and Anthropic’s Claude 3 Sonnet. Mark Zuckerberg discussed the release here, including saying that he would consider not open sourcing models that significantly aid in biological weapons development.

- Anthropic’s Claude can now use tools such as browsing the internet and running code.

US AI Policy

- Paul Christiano will join NIST AISI as Head of AI Safety, along with others in the newly announced leadership team.

- NIST launches a new GenAI evaluations platform with a competition to see if AIs can distinguish between AI-written and human-written text.

- The NSA releases guidance on AI security, with recommendations on information security for model weights and plans for securing dangerous AI capabilities.

- The Biden administration is building an alliance with the United Arab Emirates on AI, encouraging tech companies to sign deals in the country. Microsoft invested $1.5B in an Abu Dhabi-based AI company.

- The Center for AI Safety co-led a letter signed by more than 80 organizations urging the Senate Appropriations Committee to fully fund the Department of Commerce’s AI efforts next year.

International AI Policy

- The UN proposes international institutions for AI, including a scientific panel on the topic.

- The UK is considering legislation on AI that would require leading developers to conduct safety tests and share information about their models with governments.

- Here’s a new reading list on China and AI.

Opportunities

- The UK’s ARIA is offering $59M in funding for proposals to better understand if we can combine scientific world models and mathematical proofs to develop quantitative safety guarantees for AI.

- The American Philosophical Association is offering $20,000 in prizes for philosophical research on AI.

- How can AI help develop philosophical wisdom? AI Impacts is running an essay contest with $25,000 in prizes for answers to that question.

- For those at ICLR, there will be an ML Safety Social. Register here.

- The Hill and Valley Forum on May 1 will host talks from tech CEOs, venture capitalists, and members of Congress. Apply to attend here.

- State Department issues a $2M RFP for improving cyber and physical security of AI systems.

Research

- “Future-Proofing AI Regulation,” a new report from CNAS, finds that the cost of training an AI system of a given level of capabilities in the current paradigm falls by a factor of ~1000 over 5 years.

- The 2024 Stanford AI Index gathers data on important trends in AI.

- Two legal academics consider whether LLMs violate laws against writing school essays for pay.

- Theoretical computer science researchers argue that AIs could have consciousness.

- CSET released a new explainer on emergent abilities in LLMs.

Other

- AI systems are calling voters and speaking with them in the US and India.

- An interview with RAND CEO Jason Matheny on risks from AI and biotechnology.

- TIME hosts a discussion between Yoshua Bengio and Eric Schmidt on AI risks.

- The New York Times considers the challenge of evaluating AI systems, with comments from CAIS’s executive director.

- Tucker Carlson argues that if AI is harmful for humanity, then we shouldn’t build it.

See also: CAIS website, CAIS twitter, A technical safety research newsletter, An Overview of Catastrophic AI Risks, our new textbook, and our feedback form

I suspect Politico hallucinated this / there was a game-of-telephone phenomenon. I haven't seen a good source on this commitment. (But I also haven't heard people at labs say "there was no such commitment.")

I agree there's a surprising lack of published details about this, but it does seem very likely that labs made some kind of commitment to pre-deployment testing by governments. However, the details of this commitment were never published, and might never have been clear.

Here's my understanding of the evidence:

First, Rishi Sunak said in a speech at the UK AI Safety Summit: “Like-minded governments and AI companies have today reached a landmark agreement. We will work together on testing the safety of new AI models before they are released.” An article about the speech said: "Sunak said the eight companies — Amazon Web Services, Anthropic, Google, Google DeepMind, Inflection AI, Meta, Microsoft, Mistral AI and Open AI — had agreed to “deepen” the access already given to his Frontier AI Taskforce, which is the forerunner to the new institute." I cannot find the full text of the speech, and these are the most specific details I've seen from the speech.

Second, an official press release from the UK government said:

Based on the quotes from Sunak and the UK press release, it seems very unlikely that the named labs did not verbally agree to "work together on testing the safety of new AI models before they are released." But given that the text of an agreement was never released, it's also possible that the details were never hashed out, and the labs could argue that their actions did not violate any agreements that had been made. But if that were the case, then I would expect the labs to have said so. Instead, their quotes did not dispute the nature of the agreement.

Overall, it seems likely that there was some kind of verbal or handshake agreement, and that the labs violated the spirit of that agreement. But it would be incorrect to say that they violated specific concrete commitments released in writing.

I suspect the informal agreement was nothing more than the UK AI safety summit "safety testing" session, which is devoid of specific commitments.

It seems weird that none of the labs would have said that when asked for comment?

Interesting. That seems possible, and if so, then the companies did not violate that agreement.

I've updated the first paragraph of the article to more clearly describe the evidence we have about these commitments. I'd love to see more information about exactly what happened here.